Forecasting with foundation models¶

Foundation models (FMs) have triggered a fundamental paradigm shift in time series forecasting, moving the field away from modelling for each dataset and towards generalised representation learning. Driven by the same architectural breakthroughs that power Large Language Models (LLMs), FMs bring zero-shot and in-context learning capabilities to temporal data.

In the context of forecasting, a foundation model is a massively scaled neural network (typically Transformer-based) that has been pre-trained on highly diverse, cross-domain datasets spanning finance, weather, web traffic, retail and more.

Models such as Amazon Chronos, Google TimesFM, Salesforce Moirai, and Soda-INRIA TabICL frame temporal forecasting as a sequence modelling problem and often treat time series values as discrete patches or tokens. Having already internalised the structural priors of millions of series during pre-training, they can instantly infer trends, seasonality and complex dynamics in completely unseen data, eliminating the need for any domain-specific weight updates.

Foundation Models vs. Machine Learning Models¶

Foundation models and traditional machine learning models approach forecasting in fundamentally different ways. Understanding these distinctions is crucial for knowing when and how to deploy each method.

Zero-Shot Prediction

Machine learning models require a training phase. You must fit the model on your historical target data (and exogenous variables) so the algorithm can learn the optimal weights and parameters for your specific time series. Foundation models are capable of zero-shot prediction. Because the model's parameters are already frozen from its massive pre-training phase, it can generate forecasts on your data immediately, without explicitly "learning" from your specific dataset first.

The Role of the fit Method

Machine learning models must be trained: calling .fit() optimizes the model's internal parameters to minimize an error metric on your data. Foundation models, by contrast, arrive pre-trained, their weights are frozen and never updated. Calling .fit() is therefore not a training step; it simply stores the recent historical context (last window of observations, frequency, and scaling factors) so that .predict() has everything it needs at inference time.

Context Window vs. Engineered Lags

Machine learning models rely on explicitly engineered features; they require creating a tabular dataset where past values are used as columns to predict the target. Foundation models rely on a context window. You pass a raw, sequential chunk of recent historical data (e.g., the last 512 observations) directly into the model at inference time. The attention mechanism inside the model automatically decides which past data points are most relevant.

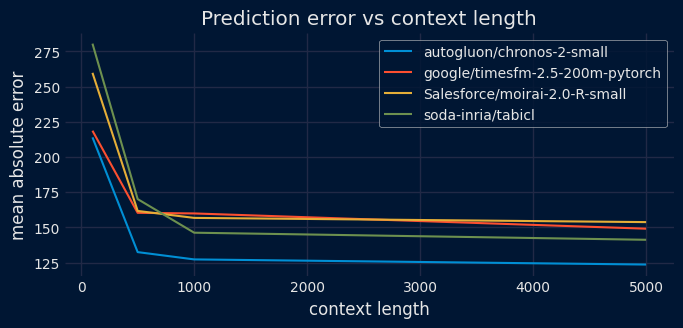

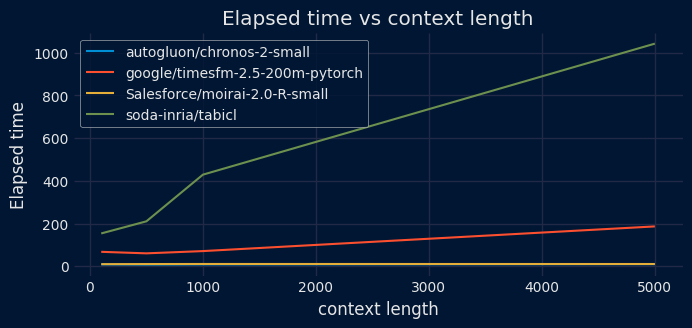

Impact of the context length¶

Because foundation forecasting models are highly generalized, they lack intrinsic knowledge of your specific dataset. To compensate, they rely on a context window, a specific period of recent historical data, to adapt to your unique scenario at inference time. This context acts as the model's short-term memory, allowing it to calculate the current trajectory of your data and identify whether the series is trending upward, accelerating, or flattening out.

The length of this context window is critical for capturing seasonality and recurring events. To accurately predict a pattern, such as a weekly sales spike or a yearly cycle, the model must actually observe that pattern within the provided history. For instance, if your data has a 365-day seasonality, providing 400 days of context allows the model to recognize and project the cycle, whereas a 30-day window would cause the model to miss the pattern entirely, resulting in a flat or inaccurate forecast.

However, increasing the context length to improve accuracy introduces a significant computational trade-off. Because most foundation models are built on Transformer architectures, the computational complexity of their attention mechanism often scales quadratically (

Ultimately, effectively utilizing foundation models requires carefully evaluating this trade-off. The best practice is to analyze the context size and select the shortest possible window that still achieves high predictive performance. Striking this balance ensures accurate, pattern-aware forecasts while preventing the unnecessary waste of computational resources.

Comparative charts of prediction error and elapsed time based on context length.

✎ Note

For more details about foundation models, visit Forecasting: Principles and Practice, the Pythonic Way.

Foundation Models in skforecast¶

Skforecast's integration is built on two layers. First, FoundationModel acts as a unified wrapper that adapts each model's native API (Chronos, TimesFM, Moirai, TabICL) behind a familiar scikit-learn interface (fit, predict, get_params). Second, ForecasterFoundation wraps that estimator to unlock the full skforecast ecosystem. It exposes the same interface as any other skforecast forecaster, meaning users can use backtesting, prediction intervals, and multi-series support with the exact same code.

Supported Foundation Models¶

| Chronos | TimesFM | Moirai | TabICL | |

|---|---|---|---|---|

| Provider | Amazon | Salesforce | Soda-Inria | |

| GitHub | chronos-forecasting | timesfm | uni2ts | tabicl |

| Documentation | Chronos models | TimesFM models | Moirai-R models | TabICL Docs |

| Available model IDs | amazon/chronos-2 autogluon/chronos-2-small autogluon/chronos-2-synth |

google/timesfm-2.5-200m-pytorch | Salesforce/moirai-2.0-R-small | soda-inria/tabicl |

| Backend | PyTorch | PyTorch | PyTorch | PyTorch |

| Forecasting type | Zero-shot | Zero-shot | Zero-shot | Zero-shot |

| Default context_length | 8192 | 512 | 2048 | 4096 |

| Max context_length | 8192 | 16384 | 2048 | 4096 |

| max_horizon | No hard limit, set via steps at predict time |

512 | No hard limit, set via steps at predict time |

No hard limit, set via steps at predict time |

| Point forecast | Median (0.5 quantile) | Mean (dedicated output array) | Median (0.5 quantile) | Mean (default, configurable to median) |

| Covariate support (exog) | Yes | No | No | Yes |

| cross_learning parameter | Yes (multi-series mode only) | No | No | No |

| Install command | pip install chronos-forecasting |

pip install git+https://github.com/google-research/timesfm.git |

pip install uni2ts |

pip install tabicl[forecast] |

💡 Tip

All four models run on the CPU. However, a CUDA GPU is recommended for faster inference, especially with long context windows. The MPS backend is also detected automatically by PyTorch and can benefit Apple Silicon users.

It is important to note that context length significantly impacts inference speed. Larger contexts provide the models with more information, but they increase processing time. Although these models boast massive context capacities, shorter contexts often achieve similar results much faster for most use cases.

Input Data Formats¶

ForecasterFoundation accepts several data formats for both the target series and exogenous variables.

Target Series (series)

The series parameter in the .fit() method supports both single-series and multi-series (global model) configurations.

| Mode | Allowed Data Type | Description |

|---|---|---|

| Single-Series | pd.Series |

A single time series with a named index. |

| Multi-Series (Wide) | pd.DataFrame |

Each column represents a separate time series. |

| Multi-Series (Long) | pd.DataFrame |

MultiIndex (Level 0: series ID, Level 1: DatetimeIndex). |

| Multi-Series (Dict) | dict[str, pd.Series] |

Keys are series identifiers, values are pandas Series. |

💡 Tip

While Long-format DataFrames are supported, they are converted to dictionaries internally. For best performance, pass a dict[str, pd.Series] directly.

Exogenous Variables (exog)

Exogenous variables must be aligned with the target series index. Currently, only Chronos and TabICL support covariates (see the Supported Foundation Models table). TimesFM and Moirai do not accept exogenous variables.\n

| Mode | Allowed Data Type | Description |

|---|---|---|

| Single-Series | pd.Series or pd.DataFrame |

Aligned to the target series index. |

| Multi-Series (Dict) | dict[str, pd.Series \| pd.DataFrame \| None] |

One entry per series. |

| Multi-Series (Broadcast) | pd.Series or pd.DataFrame |

Automatically applied to all series. |

| Multi-Series (Long) | pd.DataFrame |

MultiIndex (Level 0: series ID, Level 1: DatetimeIndex). |

Libraries and data¶

# Libraries

# ==============================================================================

import pandas as pd

import torch

import matplotlib.pyplot as plt

from skforecast.datasets import fetch_dataset

from skforecast.foundation import FoundationModel, ForecasterFoundation

from skforecast.model_selection import (

TimeSeriesFold,

backtesting_foundation

)

from skforecast.plot import set_dark_theme

color = '\033[1m\033[38;5;208m'

print(f"{color}torch version: {torch.__version__}")

print(f" Cuda available : {torch.cuda.is_available()}")

print(f" MPS available : {torch.backends.mps.is_available()}")

torch version: 2.6.0+cu124

Cuda available : True

MPS available : False

# Data download

# ==============================================================================

data = fetch_dataset(name='vic_electricity')

# Aggregating in 1H intervals

# ==============================================================================

# The Date column is eliminated so that it does not generate an error when aggregating.

data = data.drop(columns="Date")

data = (

data

.resample(rule="h", closed="left", label="right")

.agg({

"Demand": "mean",

"Temperature": "mean",

"Holiday": "mean",

})

)

data.head(3)

╭──────────────────────────── vic_electricity ─────────────────────────────╮ │ Description: │ │ Half-hourly electricity demand for Victoria, Australia │ │ │ │ Source: │ │ O'Hara-Wild M, Hyndman R, Wang E, Godahewa R (2022).tsibbledata: Diverse │ │ Datasets for 'tsibble'. https://tsibbledata.tidyverts.org/, │ │ https://github.com/tidyverts/tsibbledata/. │ │ https://tsibbledata.tidyverts.org/reference/vic_elec.html │ │ │ │ URL: │ │ https://raw.githubusercontent.com/skforecast/skforecast- │ │ datasets/main/data/vic_electricity.csv │ │ │ │ Shape: 52608 rows x 4 columns │ ╰──────────────────────────────────────────────────────────────────────────╯

| Demand | Temperature | Holiday | |

|---|---|---|---|

| Time | |||

| 2011-12-31 14:00:00 | 4323.095350 | 21.225 | 1.0 |

| 2011-12-31 15:00:00 | 3963.264688 | 20.625 | 1.0 |

| 2011-12-31 16:00:00 | 3950.913495 | 20.325 | 1.0 |

# Split data into train-test

# ==============================================================================

data = data.loc['2012-01-01 00:00:00':'2014-12-30 23:00:00', :].copy()

end_train = '2014-11-30 23:59:00'

data_train = data.loc[: end_train, :].copy()

data_test = data.loc[end_train:, :].copy()

print(f"Train dates: {data_train.index.min()} --- {data_train.index.max()} (n={len(data_train)})")

print(f"Test dates : {data_test.index.min()} --- {data_test.index.max()} (n={len(data_test)})")

Train dates: 2012-01-01 00:00:00 --- 2014-11-30 23:00:00 (n=25560) Test dates : 2014-12-01 00:00:00 --- 2014-12-30 23:00:00 (n=720)

Chronos¶

A ForecasterFoundation is created using Amazon's Chronos-2-small model.

# Create ForecasterFoundation

# ==============================================================================

estimator = FoundationModel(model_id="autogluon/chronos-2-small", context_length=500)

forecaster = ForecasterFoundation(estimator=estimator)

Each adapter accepts additional keyword arguments that control model-specific behavior (e.g., context_length, device_map, torch_dtype). These can be passed directly through the FoundationModel constructor.

For the full list of available parameters, see the API reference: ChronosAdapter, TimesFMAdapter, MoiraiAdapter, TabICLAdapter.

💡 Tip

While .fit() is used here to store the historical context and metadata, it is not strictly required. Foundation models can generate forecasts by passing the context directly to .predict() via the context parameter. However, calling .fit() first simplifies subsequent calls to .predict(), .predict_interval(), and .predict_quantiles().

# Train ForecasterFoundation

# ==============================================================================

forecaster.fit(

series = data_train["Demand"],

exog = data_train[["Temperature", "Holiday"]]

)

forecaster

ForecasterFoundation

General Information

- Model ID: autogluon/chronos-2-small

- Context length: 500

- Window size: 500

- Series names: Demand

- Exogenous included: True

- Creation date: 2026-04-24 17:23:38

- Last fit date: 2026-04-24 17:23:38

- Skforecast version: 0.22.0

- Python version: 3.12.13

- Forecaster id: None

Exogenous Variables

Temperature, Holiday

Training Information

- Context range: 'Demand': ['2012-01-01 00:00:00', '2014-11-30 23:00:00']

- Training index type: DatetimeIndex

- Training index frequency:

Model Parameters

- cross_learning: False

- context_length: 500

- device_map: auto

- torch_dtype: None

- predict_kwargs: None

Three methods can be used to predict the next predict(), predict_interval(), and predict_quantiles(). All these methods allow for passing context and context_exog to override the historical context used by the underlying model to generate predictions.

# Predictions: point forecast

# ==============================================================================

steps = 24

predictions = forecaster.predict(

steps = steps,

exog = data_test[["Temperature", "Holiday"]]

)

predictions.head(3)

| level | pred | |

|---|---|---|

| 2014-12-01 00:00:00 | Demand | 5527.678711 |

| 2014-12-01 01:00:00 | Demand | 5511.500977 |

| 2014-12-01 02:00:00 | Demand | 5457.791992 |

# Predictions: intervals

# ==============================================================================

predictions_intervals = forecaster.predict_interval(

steps = steps,

exog = data_test[["Temperature", "Holiday"]],

interval = [10, 90], # 80% prediction interval

)

predictions_intervals.head(3)

| level | pred | lower_bound | upper_bound | |

|---|---|---|---|---|

| 2014-12-01 00:00:00 | Demand | 5527.678711 | 5372.815918 | 5689.793457 |

| 2014-12-01 01:00:00 | Demand | 5511.500977 | 5318.045410 | 5733.492188 |

| 2014-12-01 02:00:00 | Demand | 5457.791992 | 5241.040527 | 5717.424805 |

Backtesting¶

Backtesting with foundation models works differently than with traditional machine learning forecasters. Since the model's weights are frozen and never updated, the concept of refitting does not apply. The refit and fixed_train_size arguments in TimeSeriesFold are ignored internally.

Instead, what changes across folds is the context window. As backtesting progresses, the amount of historical data available grows. At each fold, the model receives the most recent observations up to the fold boundary. This process has two phases: first, the context expands as more historical data becomes available with each fold; once the available history exceeds the model's context_length, the context stops growing and instead slides forward, always using the last context_length observations before the fold boundary.

Backtesting with expanding context

To learn more about backtesting, visit the backtesting user guide.

# Backtesting

# ==============================================================================

cv = TimeSeriesFold(

steps = 24,

initial_train_size = len(data.loc[:end_train]),

refit = False

)

metrics_chronos, backtest_predictions = backtesting_foundation(

forecaster = forecaster,

series = data['Demand'],

exog = data[["Temperature", "Holiday"]],

cv = cv,

metric = 'mean_absolute_error',

suppress_warnings = True

)

print("Backtest metrics")

display(metrics_chronos)

print("")

print("Backtest predictions")

backtest_predictions.head(4)

0%| | 0/30 [00:00<?, ?it/s]

Backtest metrics

| mean_absolute_error | |

|---|---|

| 0 | 171.266953 |

Backtest predictions

| level | fold | pred | |

|---|---|---|---|

| 2014-12-01 00:00:00 | Demand | 0 | 5527.678711 |

| 2014-12-01 01:00:00 | Demand | 0 | 5511.500977 |

| 2014-12-01 02:00:00 | Demand | 0 | 5457.791992 |

| 2014-12-01 03:00:00 | Demand | 0 | 5402.819336 |

# Plot predictions

# ==============================================================================

set_dark_theme()

fig, ax = plt.subplots(figsize=(7, 3))

data_test['Demand'].plot(ax=ax, label='test')

backtest_predictions['pred'].plot(ax=ax, label='predictions')

ax.legend();

Multiple series (global model)¶

The class ForecasterFoundation allows modeling and forecasting multiple series with a single model.

# Data

# ==============================================================================

data_multiseries = fetch_dataset(name="items_sales")

display(data_multiseries.head(3))

╭─────────────────────── items_sales ───────────────────────╮ │ Description: │ │ Simulated time series for the sales of 3 different items. │ │ │ │ Source: │ │ Simulated data. │ │ │ │ URL: │ │ https://raw.githubusercontent.com/skforecast/skforecast- │ │ datasets/main/data/simulated_items_sales.csv │ │ │ │ Shape: 1097 rows x 3 columns │ ╰───────────────────────────────────────────────────────────╯

| item_1 | item_2 | item_3 | |

|---|---|---|---|

| date | |||

| 2012-01-01 | 8.253175 | 21.047727 | 19.429739 |

| 2012-01-02 | 22.777826 | 26.578125 | 28.009863 |

| 2012-01-03 | 27.549099 | 31.751042 | 32.078922 |

# Split data into train-test

# ==============================================================================

end_train = '2014-07-15 23:59:00'

data_multiseries_train = data_multiseries.loc[:end_train, :]

data_multiseries_test = data_multiseries.loc[end_train:, :]

# Plot time series

# ==============================================================================

set_dark_theme()

fig, axes = plt.subplots(nrows=3, ncols=1, figsize=(7, 5), sharex=True)

for i, col in enumerate(data_multiseries.columns):

data_multiseries_train[col].plot(ax=axes[i], label='train')

data_multiseries_test[col].plot(ax=axes[i], label='test')

axes[i].set_title(col)

axes[i].set_ylabel('sales')

axes[i].set_xlabel('')

axes[i].legend(loc='upper left')

fig.tight_layout()

plt.show();

In this example, instead of calling fit(), the context is passed directly to the predict() method.

# Create and train ForecasterFoundation

# ==============================================================================

estimator = FoundationModel(model_id = "autogluon/chronos-2-small", context_length=500)

forecaster = ForecasterFoundation(estimator = estimator)

# fit() is optional; context is passed directly to predict()

# forecaster.fit(series=data_multiseries_train)

forecaster

ForecasterFoundation

General Information

- Model ID: autogluon/chronos-2-small

- Context length: 500

- Window size: 500

- Series names: None

- Exogenous included: False

- Creation date: 2026-04-24 17:23:58

- Last fit date: None

- Skforecast version: 0.22.0

- Python version: 3.12.13

- Forecaster id: None

Exogenous Variables

None

Training Information

- Context range: Not fitted

- Training index type: Not fitted

- Training index frequency: Not fitted

Model Parameters

- cross_learning: False

- context_length: 500

- device_map: auto

- torch_dtype: None

- predict_kwargs: None

# Predictions for all series (levels)

# ==============================================================================

steps = len(data_multiseries_test)

predictions_items = forecaster.predict(

steps = len(data_multiseries_test),

levels = None, # All levels are predicted

context = data_multiseries_train

)

predictions_items.head()

╭────────────────────────────────── InputTypeWarning ──────────────────────────────────╮ │ Passing a DataFrame (either wide or long format) as `series` requires additional │ │ internal transformations, which can increase computational time. It is recommended │ │ to use a dictionary of pandas Series instead. For more details, see: │ │ https://skforecast.org/latest/user_guides/independent-multi-time-series-forecasting. │ │ html#input-data │ │ │ │ Category : skforecast.exceptions.InputTypeWarning │ │ Location : │ │ c:\Users\Joaquin\miniconda3\envs\skforecast_22_py12\Lib\site-packages\skforecast\uti │ │ ls\utils.py:2799 │ │ Suppress : warnings.simplefilter('ignore', category=InputTypeWarning) │ ╰──────────────────────────────────────────────────────────────────────────────────────╯

| level | pred | |

|---|---|---|

| 2014-07-16 | item_1 | 25.523064 |

| 2014-07-16 | item_2 | 10.456666 |

| 2014-07-16 | item_3 | 11.862236 |

| 2014-07-17 | item_1 | 25.296782 |

| 2014-07-17 | item_2 | 10.701235 |

# Plot predictions

# ==============================================================================

set_dark_theme()

fig, axes = plt.subplots(nrows=3, ncols=1, figsize=(7, 5), sharex=True)

for i, col in enumerate(data_multiseries.columns):

data_multiseries_train[col].plot(ax=axes[i], label='train')

data_multiseries_test[col].plot(ax=axes[i], label='test')

predictions_items.query(f"level == '{col}'").plot(

ax=axes[i], label='predictions', color='white'

)

axes[i].set_title(col)

axes[i].set_ylabel('sales')

axes[i].set_xlabel('')

axes[i].legend(loc='upper left')

fig.tight_layout()

plt.show();

# Interval predictions for item_1 and item_2

# ==============================================================================

predictions_intervals = forecaster.predict_interval(

steps = 24,

levels = ['item_1', 'item_2'],

context = data_multiseries_train,

interval = [10, 90], # 80% prediction interval

)

predictions_intervals.head()

╭────────────────────────────────── InputTypeWarning ──────────────────────────────────╮ │ Passing a DataFrame (either wide or long format) as `series` requires additional │ │ internal transformations, which can increase computational time. It is recommended │ │ to use a dictionary of pandas Series instead. For more details, see: │ │ https://skforecast.org/latest/user_guides/independent-multi-time-series-forecasting. │ │ html#input-data │ │ │ │ Category : skforecast.exceptions.InputTypeWarning │ │ Location : │ │ c:\Users\Joaquin\miniconda3\envs\skforecast_22_py12\Lib\site-packages\skforecast\uti │ │ ls\utils.py:2799 │ │ Suppress : warnings.simplefilter('ignore', category=InputTypeWarning) │ ╰──────────────────────────────────────────────────────────────────────────────────────╯

| level | pred | lower_bound | upper_bound | |

|---|---|---|---|---|

| 2014-07-16 | item_1 | 25.464174 | 24.582005 | 26.430853 |

| 2014-07-16 | item_2 | 10.649370 | 8.679634 | 13.302807 |

| 2014-07-17 | item_1 | 25.270247 | 24.255964 | 26.327969 |

| 2014-07-17 | item_2 | 10.834244 | 8.717453 | 13.826941 |

| 2014-07-18 | item_1 | 25.175861 | 24.079086 | 26.286255 |

Other foundation models¶

The examples above use the Amazon Chronos model, but the same code structure applies to any other foundation model supported by skforecast. To use a different model, simply change the model_id parameter when instantiating the FoundationModel wrapper. The rest of the forecasting pipeline remains unchanged, allowing you to easily compare different foundation models on your dataset.

TimesFM¶

A ForecasterFoundation is created using Google's TimesFM-2.5-200m model.

# Create ForecasterFoundation

# ==============================================================================

estimator = FoundationModel(model_id="google/timesfm-2.5-200m-pytorch", context_length=500)

forecaster = ForecasterFoundation(estimator = estimator)

# Train ForecasterFoundation

# ==============================================================================

forecaster.fit(series=data_train["Demand"])

forecaster

ForecasterFoundation

General Information

- Model ID: google/timesfm-2.5-200m-pytorch

- Context length: 500

- Window size: 500

- Series names: Demand

- Exogenous included: False

- Creation date: 2026-04-24 17:24:01

- Last fit date: 2026-04-24 17:24:01

- Skforecast version: 0.22.0

- Python version: 3.12.13

- Forecaster id: None

Exogenous Variables

None

Training Information

- Context range: 'Demand': ['2012-01-01 00:00:00', '2014-11-30 23:00:00']

- Training index type: DatetimeIndex

- Training index frequency:

Model Parameters

- context_length: 500

- max_horizon: 512

- forecast_config_kwargs: None

# Predictions: point forecast

# ==============================================================================

steps = 24

predictions = forecaster.predict(steps=steps)

predictions.head(3)

| level | pred | |

|---|---|---|

| 2014-12-01 00:00:00 | Demand | 5658.415039 |

| 2014-12-01 01:00:00 | Demand | 5671.861816 |

| 2014-12-01 02:00:00 | Demand | 5747.938477 |

# Predictions: intervals

# ==============================================================================

predictions_intervals = forecaster.predict_interval(

steps = steps,

interval = [10, 90], # 80% prediction interval

)

predictions_intervals.head(3)

| level | pred | lower_bound | upper_bound | |

|---|---|---|---|---|

| 2014-12-01 00:00:00 | Demand | 5658.415039 | 5541.004883 | 5790.344238 |

| 2014-12-01 01:00:00 | Demand | 5671.861816 | 5470.189453 | 5899.113281 |

| 2014-12-01 02:00:00 | Demand | 5747.938477 | 5450.534180 | 6064.879883 |

# Backtesting

# ==============================================================================

cv = TimeSeriesFold(

steps = 24,

initial_train_size = len(data.loc[:end_train]),

refit = False

)

metrics_timesfm, backtest_predictions = backtesting_foundation(

forecaster = forecaster,

series = data['Demand'],

cv = cv,

metric = 'mean_absolute_error',

suppress_warnings = True

)

print("Backtest metrics")

display(metrics_timesfm)

print("")

print("Backtest predictions")

backtest_predictions.head(4)

0%| | 0/168 [00:00<?, ?it/s]

Backtest metrics

| mean_absolute_error | |

|---|---|

| 0 | 160.357018 |

Backtest predictions

| level | fold | pred | |

|---|---|---|---|

| 2014-07-16 00:00:00 | Demand | 0 | 6189.843750 |

| 2014-07-16 01:00:00 | Demand | 0 | 5988.112793 |

| 2014-07-16 02:00:00 | Demand | 0 | 5830.692383 |

| 2014-07-16 03:00:00 | Demand | 0 | 5696.288086 |

Moirai¶

A ForecasterFoundation is created using Salesforce's Moirai-2.0-R-small model.

# Create ForecasterFoundation

# ==============================================================================

estimator = FoundationModel(model_id="Salesforce/moirai-2.0-R-small", context_length=500)

forecaster = ForecasterFoundation(estimator=estimator)

# Train ForecasterFoundation

# ==============================================================================

forecaster.fit(series=data_train["Demand"])

forecaster

ForecasterFoundation

General Information

- Model ID: Salesforce/moirai-2.0-R-small

- Context length: 500

- Window size: 500

- Series names: Demand

- Exogenous included: False

- Creation date: 2026-04-24 17:24:41

- Last fit date: 2026-04-24 17:24:41

- Skforecast version: 0.22.0

- Python version: 3.12.13

- Forecaster id: None

Exogenous Variables

None

Training Information

- Context range: 'Demand': ['2012-01-01 00:00:00', '2014-11-30 23:00:00']

- Training index type: DatetimeIndex

- Training index frequency:

Model Parameters

- context_length: 500

- device: auto

# Predictions: point forecast

# ==============================================================================

steps = 24

predictions = forecaster.predict(steps=steps)

predictions.head(3)

| level | pred | |

|---|---|---|

| 2014-12-01 00:00:00 | Demand | 5731.725098 |

| 2014-12-01 01:00:00 | Demand | 5870.827148 |

| 2014-12-01 02:00:00 | Demand | 5959.207031 |

# Predictions: intervals

# ==============================================================================

predictions_intervals = forecaster.predict_interval(

steps = steps,

interval = [10, 90], # 80% prediction interval

)

predictions_intervals.head(3)

| level | pred | lower_bound | upper_bound | |

|---|---|---|---|---|

| 2014-12-01 00:00:00 | Demand | 5731.725098 | 5517.737793 | 5940.646484 |

| 2014-12-01 01:00:00 | Demand | 5870.827148 | 5548.743164 | 6176.801270 |

| 2014-12-01 02:00:00 | Demand | 5959.207031 | 5599.376953 | 6323.206055 |

# Backtesting

# ==============================================================================

cv = TimeSeriesFold(

steps = 24,

initial_train_size = len(data.loc[:end_train]),

refit = False

)

metrics_moirai, backtest_predictions = backtesting_foundation(

forecaster = forecaster,

series = data['Demand'],

cv = cv,

metric = 'mean_absolute_error',

suppress_warnings = True

)

print("Backtest metrics")

display(metrics_moirai)

print("")

print("Backtest predictions")

backtest_predictions.head(4)

0%| | 0/168 [00:00<?, ?it/s]

Backtest metrics

| mean_absolute_error | |

|---|---|

| 0 | 161.691106 |

Backtest predictions

| level | fold | pred | |

|---|---|---|---|

| 2014-07-16 00:00:00 | Demand | 0 | 6222.097656 |

| 2014-07-16 01:00:00 | Demand | 0 | 6114.366699 |

| 2014-07-16 02:00:00 | Demand | 0 | 5969.839844 |

| 2014-07-16 03:00:00 | Demand | 0 | 5920.479492 |

TabICL¶

A ForecasterFoundation is created using Soda-Inria's TabICL model.

# Create ForecasterFoundation

# ==============================================================================

estimator = FoundationModel(model_id="soda-inria/tabicl", context_length=500)

forecaster = ForecasterFoundation(estimator=estimator)

# Train ForecasterFoundation

# ==============================================================================

forecaster.fit(

series = data_train["Demand"],

exog = data_train[["Temperature", "Holiday"]]

)

forecaster

ForecasterFoundation

General Information

- Model ID: soda-inria/tabicl

- Context length: 500

- Window size: 500

- Series names: Demand

- Exogenous included: True

- Creation date: 2026-04-24 17:24:52

- Last fit date: 2026-04-24 17:24:52

- Skforecast version: 0.22.0

- Python version: 3.12.13

- Forecaster id: None

Exogenous Variables

Temperature, Holiday

Training Information

- Context range: 'Demand': ['2012-01-01 00:00:00', '2014-11-30 23:00:00']

- Training index type: DatetimeIndex

- Training index frequency:

Model Parameters

- context_length: 500

- point_estimate: mean

- tabicl_config: None

- temporal_features: None

# Predictions: point forecast

# ==============================================================================

steps = 24

predictions = forecaster.predict(

steps = steps,

exog = data_test[["Temperature", "Holiday"]]

)

predictions.head(3)

GPU 0:: 100%|██████████| 1/1 [00:01<00:00, 1.60s/it]

| level | pred | |

|---|---|---|

| 2014-12-01 00:00:00 | Demand | 5590.919922 |

| 2014-12-01 01:00:00 | Demand | 5662.487305 |

| 2014-12-01 02:00:00 | Demand | 5661.673828 |

# Predictions: intervals

# ==============================================================================

predictions_intervals = forecaster.predict_interval(

steps = steps,

exog = data_test[["Temperature", "Holiday"]],

interval = [10, 90], # 80% prediction interval

)

predictions_intervals.head(3)

GPU 0:: 100%|██████████| 1/1 [00:00<00:00, 1.07it/s]

| level | pred | lower_bound | upper_bound | |

|---|---|---|---|---|

| 2014-12-01 00:00:00 | Demand | 5617.177246 | 5159.889160 | 5952.955078 |

| 2014-12-01 01:00:00 | Demand | 5678.133301 | 5215.843750 | 6059.711914 |

| 2014-12-01 02:00:00 | Demand | 5677.455078 | 5131.911133 | 6149.854492 |

# Backtesting

# ==============================================================================

cv = TimeSeriesFold(

steps = 24,

initial_train_size = len(data.loc[:end_train]),

refit = False

)

metrics_tabicl, backtest_predictions = backtesting_foundation(

forecaster = forecaster,

series = data['Demand'],

exog = data[["Temperature", "Holiday"]],

cv = cv,

metric = 'mean_absolute_error',

suppress_warnings = True

)

print("Backtest metrics")

display(metrics_tabicl)

print("")

print("Backtest predictions")

backtest_predictions.head(4)

Model comparison¶

The following table summarizes the backtesting results (Mean Absolute Error) for the four foundation models on the same dataset.

# Comparison of backtesting metrics

# ==============================================================================

comparison = pd.DataFrame({

"Model": [

"Chronos-2 (small)*",

"TimesFM-2.5 (200m)",

"Moirai-2.0-R (small)",

"TabICLv2*"

],

"mean_absolute_error": [

metrics_chronos["mean_absolute_error"].iloc[0],

metrics_timesfm["mean_absolute_error"].iloc[0],

metrics_moirai["mean_absolute_error"].iloc[0],

metrics_tabicl["mean_absolute_error"].iloc[0]

],

})

comparison.style.highlight_min(

subset="mean_absolute_error", color="green"

).format(precision=4)

| Model | mean_absolute_error | |

|---|---|---|

| 0 | Chronos-2 (small)* | 171.2670 |

| 1 | TimesFM-2.5 (200m) | 160.3570 |

| 2 | Moirai-2.0-R (small) | 161.6911 |

| 3 | TabICLv2 | 170.1016 |

* Chronos-2 (small) and TabICLv2 are the only ones that allow to include exogenous features.

⚠ Warning: Data Leakage

These examples use a widely available public dataset for illustrative purposes. It is highly probable that the foundation models (Chronos, TimesFM, Moirai, TabICL) were exposed to these data points during their pre-training phase. As a result, the predictions may be more optimistic than what would be achieved in a real-world production environment with private or novel data.